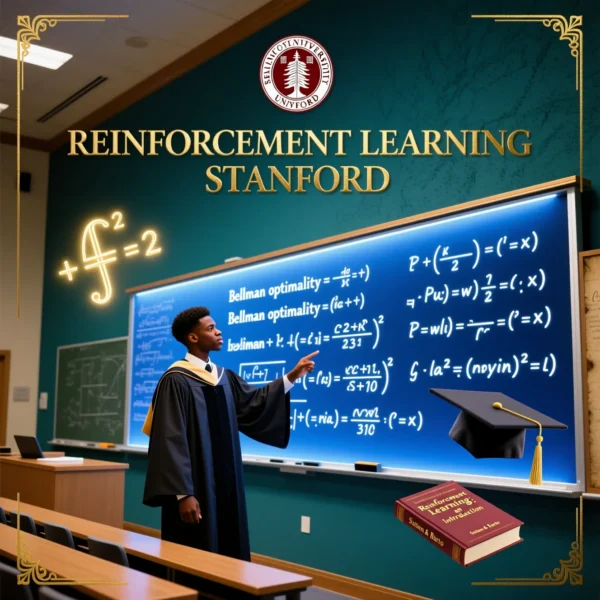

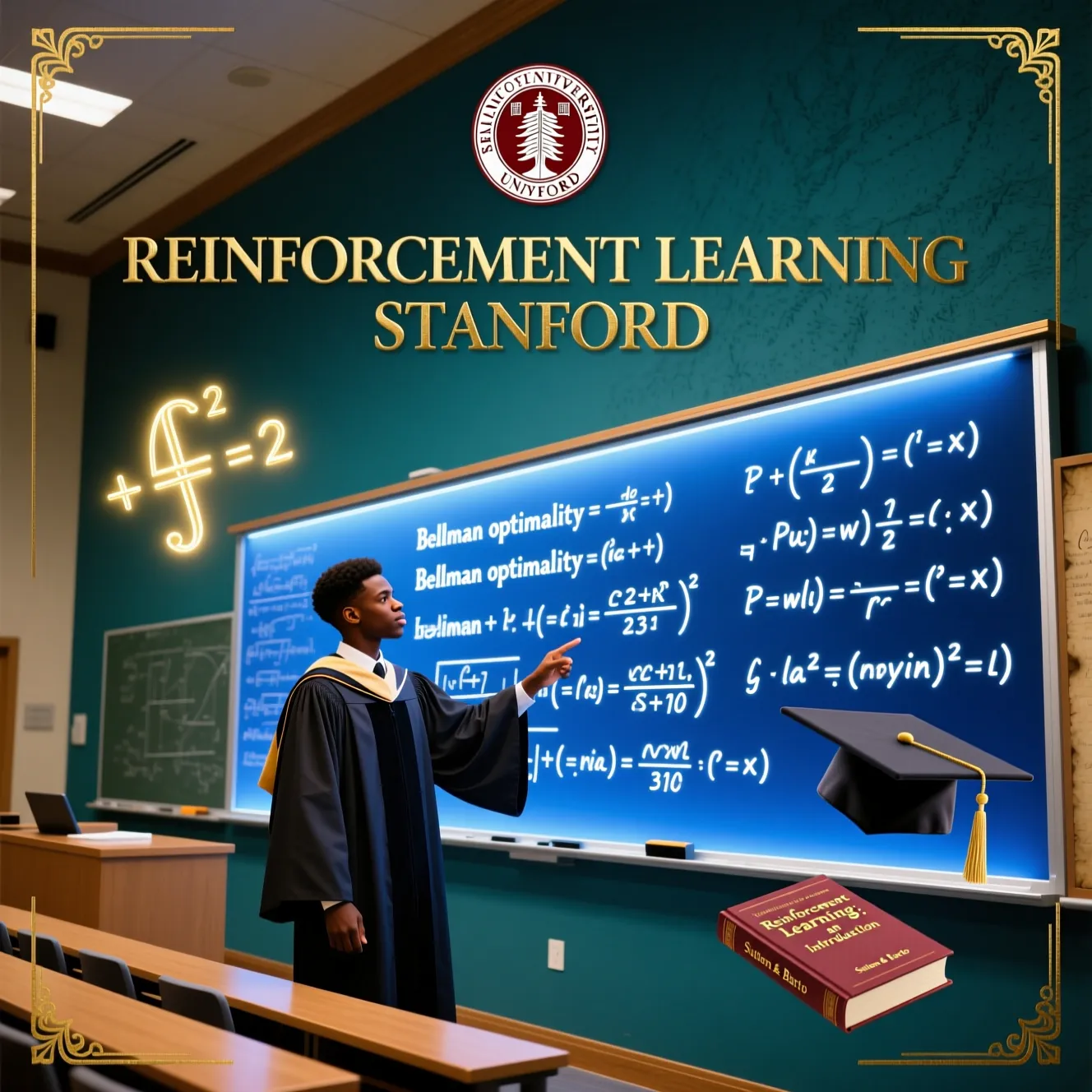

Reinforcement Learning: Stanford University’s Graduate Curriculum

This course captures the depth and rigor of Stanford’s graduate-level reinforcement learning class, which is taught by leading researchers in the field. You’ll go beyond tutorials to master the **mathematical foundations**, **algorithmic design**, and **practical implementation** of modern RL systems used in robotics, finance, and AI research.

What You’ll Master

- Dynamic programming: value iteration, policy iteration, modified policy iteration

- Monte Carlo and temporal-difference methods: on-policy vs. off-policy learning, TD(λ)

- Function approximation: linear methods, neural networks, convergence guarantees

- Policy gradient methods: the policy gradient theorem, REINFORCE, natural gradients, TRPO intuition

- Exploration theory: UCB, Thompson sampling, information-directed sampling
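
As a taste of the exploration material listed above, here is a minimal sketch of the UCB1 rule on a Bernoulli bandit. It is not taken from the course materials: the function name `ucb1`, the arm means, and the seed are illustrative assumptions.

```python
import math
import random


def ucb1(arm_means, steps, seed=0):
    """Run UCB1 on a simulated Bernoulli bandit and return pull counts per arm.

    arm_means is a hypothetical list of success probabilities, one per arm.
    """
    rng = random.Random(seed)
    k = len(arm_means)
    counts = [0] * k   # number of times each arm was pulled
    sums = [0.0] * k   # total reward collected from each arm

    for t in range(1, steps + 1):
        if t <= k:
            # Initialization: pull every arm once so the averages are defined.
            arm = t - 1
        else:
            # Pick the arm with the highest empirical mean plus exploration bonus.
            arm = max(
                range(k),
                key=lambda i: sums[i] / counts[i]
                + math.sqrt(2.0 * math.log(t) / counts[i]),
            )
        reward = 1.0 if rng.random() < arm_means[arm] else 0.0
        counts[arm] += 1
        sums[arm] += reward

    return counts


if __name__ == "__main__":
    print(ucb1([0.2, 0.8], steps=2000))
```

The bonus term shrinks as an arm is pulled more often, so rarely tried arms keep getting revisited while most pulls go to the arm that looks best.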

Projects & Assignments

- Solve Gridworld with dynamic programming

- Implement TD control on the Racetrack environment

- Build a linear value approximator for MountainCar

- Derive the policy gradient theorem from first principles, then implement it in code
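
To illustrate the kind of work the first project involves, here is a minimal sketch of value iteration on a small gridworld. The layout is an assumption made for this example, not the course's actual assignment: a 4x4 grid, a terminal goal in the bottom-right corner, a reward of -1 per step, and no discounting.

```python
import numpy as np

# Hypothetical setup: 4x4 grid, terminal goal in the bottom-right corner.
N = 4
GOAL = (N - 1, N - 1)
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right
GAMMA = 1.0  # undiscounted episodic task


def step(state, action):
    """Deterministic transition; moving off the grid leaves the state unchanged."""
    r, c = state
    nr, nc = r + action[0], c + action[1]
    if not (0 <= nr < N and 0 <= nc < N):
        nr, nc = r, c
    return (nr, nc), -1.0


def value_iteration(tol=1e-8):
    """Sweep the Bellman optimality backup in place until values stop changing."""
    V = np.zeros((N, N))
    while True:
        delta = 0.0
        for r in range(N):
            for c in range(N):
                if (r, c) == GOAL:
                    continue  # terminal state keeps value 0
                backups = []
                for a in ACTIONS:
                    nxt, reward = step((r, c), a)
                    backups.append(reward + GAMMA * V[nxt])
                best = max(backups)
                delta = max(delta, abs(best - V[r, c]))
                V[r, c] = best
        if delta < tol:
            return V


if __name__ == "__main__":
    print(value_iteration())
```

Under these assumptions each state's optimal value is the negative of its shortest-path distance to the goal, which makes the result easy to check by hand.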

Why Stanford’s Approach?

- Rigorous, not rushed—you’ll understand *why* algorithms work, not just how to call them

- Balances theory and code—proofs paired with Python implementations

- Prepares you for research—covers topics from Sutton & Barto and beyond

Who Should Take This?

- Graduate students in CS, AI, or robotics

- ML engineers aiming for RL specialist roles

- Researchers needing a structured, university-grade reference

- Serious practitioners tired of superficial tutorials

From Intuition to Proof to Code

This course doesn’t just teach reinforcement learning—it teaches you to **think like an RL scientist**.

Ready to master RL the Stanford way? Enroll now.